AI-Powered Attackers Outpace Patching: Bug Exploitation Now Top Google Cloud Attack Vector

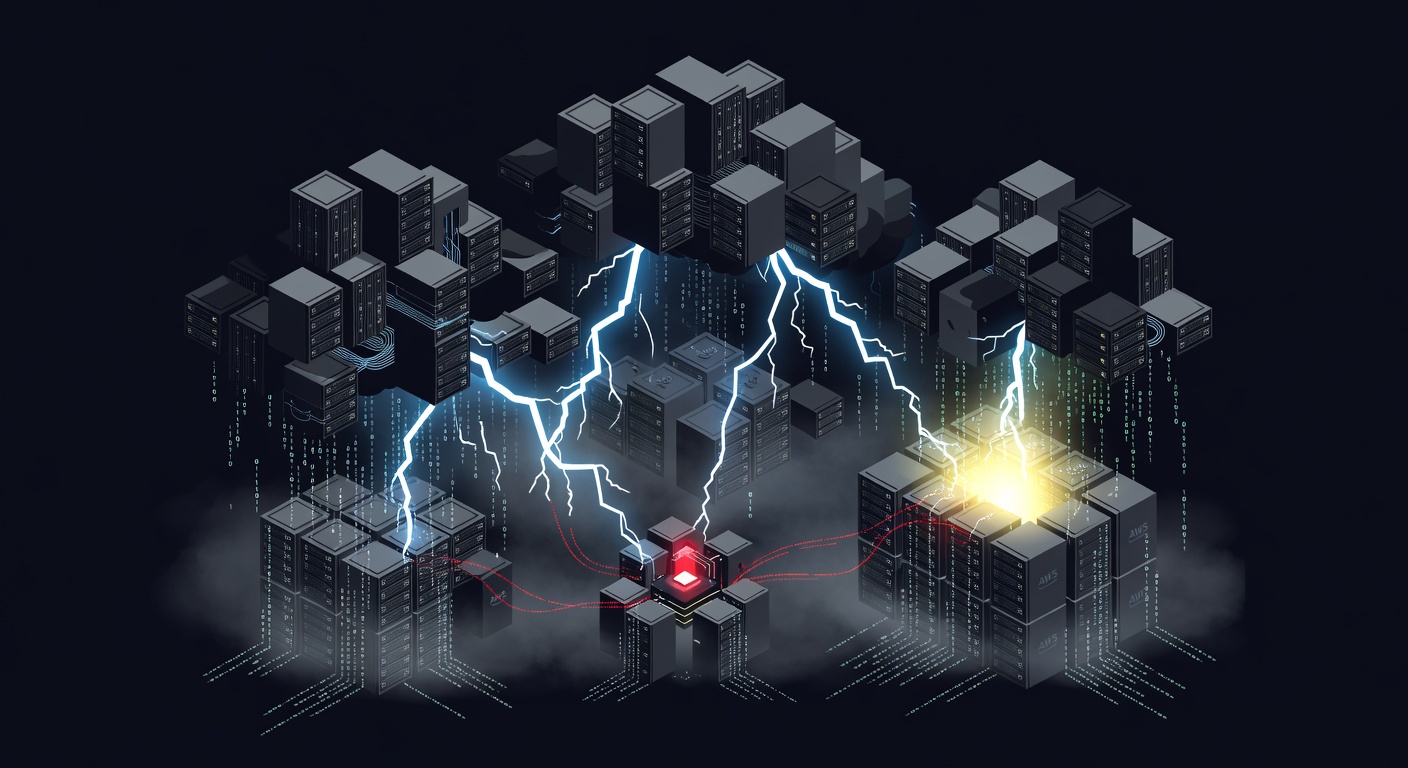

The cybersecurity landscape has shifted dramatically as artificial intelligence empowers attackers to exploit vulnerabilities faster than organizations can patch them, making bug exploitation the leading cause of Google Cloud compromises.

The Paradigm Shift in Cloud Security

The traditional narrative of cloud security breaches has been rewritten. For years, cybersecurity professionals have focused on preventing attacks through stolen credentials and misconfigured cloud services, and for good reason: these attack vectors dominated the threat landscape, representing the path of least resistance for cybercriminals seeking to infiltrate cloud infrastructure.

However, recent analysis of Google Cloud attacks reveals a fundamental shift in adversary tactics. Vulnerability exploitation has emerged as the primary attack vector, surpassing both credential theft and configuration errors. This transformation represents more than just a statistical anomaly; it signals a new era where artificial intelligence has fundamentally altered the economics and efficiency of cyber attacks.

The AI Acceleration Factor

Artificial intelligence is available to both sides of the cybersecurity arms race, but for now it is tilting the scales in favor of attackers. AI-powered tools now enable threat actors to identify, analyze, and exploit vulnerabilities with unprecedented speed and precision.

Traditional vulnerability research required significant human expertise and time investment. Security researchers would manually analyze code, reverse-engineer applications, and craft exploits through painstaking trial and error. This process created a natural buffer period during which organizations could identify and patch vulnerabilities before widespread exploitation.

AI has compressed this timeline dramatically. Machine learning algorithms can now scan codebases, identify potential vulnerability patterns, and even generate proof-of-concept exploits in a fraction of the time required by human researchers. Large language models trained on vast repositories of security research can synthesize new attack techniques by combining existing methodologies in novel ways.

Moreover, AI-powered fuzzing tools can automatically generate thousands of test cases to trigger unexpected application behavior, uncovering zero-day vulnerabilities that might otherwise remain hidden for months or years. These capabilities have created a scenario where attackers can move from vulnerability discovery to active exploitation faster than traditional patch management cycles can respond.
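
To make the fuzzing idea concrete, here is a minimal sketch that uses plain random mutation rather than any AI guidance. The target `parse_record`, its length-prefixed format, and the planted bug are all hypothetical and exist only for this illustration; real fuzzers add coverage feedback and far smarter input generation.

```python
import random


def parse_record(data: bytes) -> bytes:
    """Toy length-prefixed parser (hypothetical target with a planted bug)."""
    if not data:
        raise ValueError("empty input")
    length = data[0]
    payload = data[1:1 + length]
    # Planted bug: trusts the length byte, so a truncated payload
    # triggers an unhandled IndexError instead of a clean rejection.
    if length > 0:
        _ = payload[length - 1]
    return payload


def mutate(seed: bytes, rng: random.Random) -> bytes:
    """Flip, insert, or delete one random byte of the seed."""
    buf = bytearray(seed)
    choice = rng.randrange(3)
    if choice == 0 and buf:
        buf[rng.randrange(len(buf))] = rng.randrange(256)
    elif choice == 1:
        buf.insert(rng.randrange(len(buf) + 1), rng.randrange(256))
    elif buf:
        del buf[rng.randrange(len(buf))]
    return bytes(buf)


def fuzz(seed: bytes, iterations: int, rng_seed: int = 0) -> list[bytes]:
    """Return mutated inputs that raise anything other than ValueError."""
    rng = random.Random(rng_seed)
    crashes = []
    for _ in range(iterations):
        candidate = mutate(seed, rng)
        try:
            parse_record(candidate)
        except ValueError:
            pass  # expected, handled rejection
        except Exception:
            crashes.append(candidate)  # unexpected failure worth triaging
    return crashes
```

Calling `fuzz(b"\x03abc", 200)` returns the mutated inputs that tripped the planted bug. Defenders can run the same kind of loop against their own parsers before attackers do.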

Technical Implications for Google Cloud Infrastructure

Google Cloud Platform's diverse service ecosystem presents an expansive attack surface that AI-enhanced adversaries can systematically probe. The platform's extensive use of containerized applications, microservices architectures, and API-driven integrations creates numerous potential entry points for exploitation.

Container orchestration platforms like Google Kubernetes Engine (GKE) are particularly attractive targets. These systems often run complex networking configurations and handle sensitive workload deployments. Vulnerabilities in container runtimes, orchestration APIs, or networking components can provide attackers with lateral movement capabilities across entire cloud environments.

The serverless computing model, exemplified by Google Cloud Functions, introduces additional complexity. While serverless architectures reduce infrastructure management overhead, they also create new categories of vulnerabilities related to function-to-function communication, event-driven triggers, and dependency management. AI-powered attackers can rapidly identify and exploit these architectural weaknesses.

Database services represent another critical attack surface. Google Cloud SQL, Firestore, and BigQuery instances often contain the most sensitive organizational data. Vulnerabilities in database engines, access control mechanisms, or query processing logic can provide attackers with direct access to confidential information.

Real-World Impact and Case Studies

The shift toward vulnerability exploitation has produced measurable consequences across various industries. Financial services organizations have reported increased attempts to exploit vulnerabilities in cloud-hosted trading platforms and customer data management systems. Healthcare providers have seen attacks targeting vulnerabilities in cloud-based electronic health record systems and medical device management platforms.

E-commerce companies have experienced particular challenges as attackers target vulnerabilities in payment processing workflows and customer data analytics platforms. These attacks often focus on exploiting race conditions in transaction processing logic or privilege escalation vulnerabilities in customer account management systems.
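
A race condition of the kind mentioned above often comes down to a check-then-act gap. The sketch below is a simplified, hypothetical `Account` class, not code from any real payment system: the unsafe method checks the balance and debits it in two separate steps, while the safe method holds a lock across both.

```python
import threading


class Account:
    """Hypothetical in-memory account used only to illustrate the race."""

    def __init__(self, balance: int):
        self.balance = balance
        self._lock = threading.Lock()

    def withdraw_unsafe(self, amount: int) -> bool:
        # Check-then-act: a second thread can pass the same check
        # before this thread's debit lands, allowing an overdraft.
        if self.balance >= amount:
            self.balance -= amount
            return True
        return False

    def withdraw(self, amount: int) -> bool:
        # The lock makes the check and the debit one atomic step.
        with self._lock:
            if self.balance >= amount:
                self.balance -= amount
                return True
            return False
```

In a real system the same guarantee usually comes from a database transaction or a conditional update rather than an in-process lock, but the principle is the same: the check and the state change must not be separable.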

The manufacturing sector has not been immune, with attackers exploiting vulnerabilities in cloud-connected industrial IoT platforms and supply chain management systems. These attacks can have cascading effects, disrupting production schedules and compromising sensitive intellectual property.

The speed of AI-assisted exploitation has created scenarios where organizations discover they've been compromised only after attackers have already exfiltrated significant amounts of data or established persistent access to critical systems. Traditional incident response timelines, designed around human-paced attacks, are proving inadequate for AI-accelerated threats.

How to Protect Yourself

Defending against AI-powered vulnerability exploitation requires a fundamental reimagining of cybersecurity strategies. Organizations must adopt proactive, continuous security practices that can match the pace of AI-assisted attacks.

Implement Continuous Vulnerability Management: Replace traditional quarterly patch cycles with continuous monitoring and rapid response capabilities. Automated vulnerability scanners should run continuously, and critical patches should be deployed within hours rather than days or weeks.
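
One way to turn "hours rather than days" into something enforceable is to attach a deadline to every scanner finding. The sketch below assumes findings carry a CVSS v3 base score; the `Finding` record, the `SLA_HOURS` windows, and the rule that active exploitation forces the shortest window are illustrative assumptions, not values from any standard.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Assumed remediation windows in hours (illustrative, not a standard).
SLA_HOURS = {"critical": 24, "high": 72, "medium": 336, "low": 720}


@dataclass
class Finding:
    finding_id: str            # hypothetical scanner finding identifier
    cvss: float                # CVSS v3 base score, 0.0 to 10.0
    detected_at: datetime
    actively_exploited: bool = False


def severity(cvss: float) -> str:
    """Map a CVSS v3 base score to a band; anything below 4.0 is treated as low."""
    if cvss >= 9.0:
        return "critical"
    if cvss >= 7.0:
        return "high"
    if cvss >= 4.0:
        return "medium"
    return "low"


def patch_deadline(finding: Finding) -> datetime:
    """Active exploitation overrides the score and forces the shortest window."""
    band = "critical" if finding.actively_exploited else severity(finding.cvss)
    return finding.detected_at + timedelta(hours=SLA_HOURS[band])


def overdue(findings: list[Finding], now: datetime) -> list[Finding]:
    """Return findings past their deadline, earliest deadline first."""
    late = [f for f in findings if patch_deadline(f) < now]
    return sorted(late, key=patch_deadline)
```

Running `overdue(findings, datetime.now())` on each scan gives a queue of missed deadlines that can feed alerting or block deployments.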

Adopt Zero-Trust Architecture: Assume that vulnerabilities will be exploited and design systems that limit the potential impact of successful attacks. Implement micro-segmentation, least-privilege access controls, and continuous authentication mechanisms.
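
The core of least-privilege access is a deny-by-default decision: a request succeeds only if an explicit grant covers it. The following is a minimal sketch of that rule. The `Grant` shape, the prefix-based resource matching, and the service names in the usage note are hypothetical and do not mirror the Google Cloud IAM API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    principal: str        # who is allowed (hypothetical service identity)
    action: str           # what they may do, e.g. "read"
    resource_prefix: str  # which resources, matched by prefix


def is_allowed(grants: list[Grant], principal: str, action: str, resource: str) -> bool:
    """Deny by default: allow only when an explicit grant matches all three fields."""
    return any(
        g.principal == principal
        and g.action == action
        and resource.startswith(g.resource_prefix)
        for g in grants
    )
```

With a single grant `Grant("svc-orders", "read", "orders/")`, the call `is_allowed(grants, "svc-orders", "read", "orders/123")` succeeds, while a write by the same service or a read by any other identity is refused. A compromised service can therefore reach only what it was explicitly granted.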

Leverage AI for Defense: Deploy AI-powered security tools that can match the capabilities of AI-assisted attackers. Machine learning-based intrusion detection systems can identify anomalous behavior patterns that might indicate exploitation attempts.
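
As a much simpler stand-in for a machine learning detector, the sketch below flags values that sit far from a known-good baseline using a z-score. The function name, the default threshold of 3.0, and the idea of feeding it per-minute request counts are assumptions made for illustration; production systems use richer features and models.

```python
from statistics import mean, stdev


def anomalies(baseline: list[float], observed: list[float], threshold: float = 3.0) -> list[int]:
    """Return indices of observed values more than `threshold` standard
    deviations away from the baseline mean."""
    mu = mean(baseline)
    sigma = stdev(baseline)  # needs at least two baseline points
    if sigma == 0:
        # Flat baseline: any deviation at all is anomalous.
        return [i for i, x in enumerate(observed) if x != mu]
    return [i for i, x in enumerate(observed) if abs(x - mu) / sigma > threshold]
```

For example, with a baseline of request counts hovering around 100 per minute, a sudden reading of 140 stands out while readings of 95 or 101 do not. Choosing the threshold is a trade-off between missed detections and alert fatigue.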

Enhance Network Security: Use VPN services like hide.me to encrypt network communications and obscure traffic patterns from potential attackers. VPNs provide an additional layer of protection by masking the true origin and destination of network connections, making reconnaissance more difficult for attackers.

Implement Runtime Application Security: Deploy runtime application security tools that can detect and block exploitation attempts in real-time, even for previously unknown vulnerabilities.

Establish Threat Intelligence Capabilities: Maintain awareness of emerging vulnerabilities and attack techniques through threat intelligence feeds and security research communities. This knowledge enables proactive defense preparation.

Conduct Regular Security Assessments: Run frequent penetration tests and vulnerability assessments to identify weaknesses before attackers do. Consider using AI-powered testing tools to match the capabilities of potential adversaries.

Frequently Asked Questions

How quickly can AI-powered attackers exploit new vulnerabilities compared to traditional methods?

AI-powered attackers can move from vulnerability discovery to active exploitation in hours or days, compared to the weeks or months typically required for traditional manual exploitation. This dramatic acceleration is due to AI's ability to automatically analyze code, generate exploits, and scale attacks across multiple targets simultaneously.

What makes Google Cloud particularly vulnerable to these AI-enhanced attacks?

Google Cloud's extensive service ecosystem and API-driven architecture provide numerous potential attack vectors that AI systems can systematically probe. The platform's widespread adoption means vulnerabilities can have broad impact, making it an attractive target. Additionally, the complexity of modern cloud applications creates subtle interaction vulnerabilities that AI systems excel at discovering.

Can traditional security measures still protect against AI-powered vulnerability exploitation?

Traditional security measures remain important but are insufficient on their own. While firewalls, antivirus software, and basic access controls provide foundational protection, they must be supplemented with AI-powered defense systems, continuous monitoring, and rapid response capabilities to effectively counter AI-enhanced threats. The key is layering multiple defensive strategies rather than relying on any single approach.